Difference: FRCVDataRepositoryHSVD (2 vs. 3)

Revision 3 - 2017-05-31 - DamianLyons

| Line: 1 to 1 | |||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| |||||||||||||||

| Changed: | |||||||||||||||

| < < | <meta name="robots" content="noindex" /> | ||||||||||||||

| > > |

Effect of Field of View in Stereovision-based Visual Homing

D.M. Lyons, L. Del Signore, B. Barriage

Abstract

Navigation is challenging for an autonomous robot operating in an unstructured environment. Visual homing is a local navigation technique, inspired by biological models, that is used to direct a robot to a previously seen location. Most visual homing approaches use a panoramic camera. Prior work has shown that exploiting depth cues in homing, e.g., from a stereo-camera, leads to improved performance. However, many stereo-cameras have a limited field of view (FOV). We present a stereovision database methodology for visual homing. We use two databases we have collected, one indoor and one outdoor, to evaluate the effect of FOV on the performance of our homing with stereovision algorithm. Based on over 100,000 homing trials, we show that, contrary to intuition, a panoramic field of view does not necessarily lead to the best performance, and we discuss the implications of this.

Database Collection Methodology

The robot platform used by Nirmal & Lyons [1] was a Pioneer 3AT robot with a Bumblebee2 stereo-camera mounted on a Pan-Tilt (PT) base. The same platform is used to collect the stereo homing databases for this paper. As in prior work [2], a grid of squares is superimposed on the area to be recorded, and imagery is collected at each grid location. We need to collect a 360 deg FOV for the stereo data at each location, which allows us to evaluate the benefit of FOVs from 66 deg up to 360 deg. The Bumblebee2 with a 3.8 mm lens has a 66 deg horizontal FOV for each camera. The PT base is used to rotate the camera to construct a wide FOV composite stereo image.
Nirmal & Lyons construct this image by simply concatenating adjacent (left camera) visual images and depth images into a single composite visual image and a single composite depth image. This is quicker than attempting to stitch the visual images and integrate the depth images, and Nirmal & Lyons note that the overlap increases feature matching, a positive effect for homing. The PT base is used to collect 10 visual and depth images at orientations 36 deg apart, starting at 0 deg with respect to the X axis. The right-handed (RH) coordinate frame is centered on the robot, with the X axis along the direction in which the robot is facing and the Y axis pointing left. The final angle also requires the robot to be reoriented, because of pan limits on the PT unit. The overlap in horizontal FOV (HFOV) between adjacent images is approximately 50%.

Each visual image is a 1024x768 8-bit gray level image, and each depth image is a listing of the (x, y, z) in robot-centered coordinates for each point in the 1024x768 image for which stereo disparity can be calculated. The visual images and depth files are named for the orientation at which they were collected. The visual images are histogram equalized, and the stereo images statistically filtered, before being stored.

The grid squares for a location are numbered in row-major order i in { 0, ..., nxn }, with a folder SQUARE_i containing the 10 visual and 10 depth images for each square. The resolution of the grid r is the actual grid square size in meters and is used to translate any position p = (x, y) in { (0,0), ..., ((n-1)r, (n-1)r) } to its grid coordinates and hence to a SQUARE_i folder. The orientation of the robot theta is used to determine which images to select. For example, in the Nirmal & Lyons HSV algorithm, at orientation theta, the image at (theta div 36)*36 is the center image, and two images clockwise (CW) and two counterclockwise (CCW) of it are concatenated with it into a 5-image-wide composite image for homing.
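
The image-selection rule described above for the HSV algorithm can be sketched in a few lines of Python. This is only an illustration, not the authors' code: the function names are hypothetical, theta is assumed to be in degrees, counterclockwise is assumed to be the positive angular direction (consistent with an RH frame with X forward and Y left), and the CCW-to-CW ordering of the returned list is an assumption about how the composite is laid out. The second function simply adds up the angular span of adjacent images from the 36 deg spacing and the 66 deg HFOV given in the text.

```python
IMAGE_STEP_DEG = 36   # spacing between stored orientations (from the text)
CAMERA_HFOV_DEG = 66  # horizontal FOV of one Bumblebee2 camera (from the text)


def composite_orientations(theta_deg, images_each_side=2):
    """Return the stored orientations (deg) used for the composite image.

    The list runs from the most counterclockwise image to the most
    clockwise one; this ordering is an assumption made for illustration.
    """
    # Center image: (theta div 36) * 36, after wrapping theta into [0, 360).
    center = int((theta_deg % 360) // IMAGE_STEP_DEG) * IMAGE_STEP_DEG
    # Assumption: CCW is the positive direction (X forward, Y left).
    offsets = range(images_each_side, -images_each_side - 1, -1)
    return [(center + k * IMAGE_STEP_DEG) % 360 for k in offsets]


def composite_hfov_deg(num_images):
    """Angular span covered by num_images adjacent images, capped at 360."""
    return min(360, (num_images - 1) * IMAGE_STEP_DEG + CAMERA_HFOV_DEG)


if __name__ == "__main__":
    print(composite_orientations(100))  # center image is at 72 deg
    print(composite_hfov_deg(5))        # span of a 5-image-wide composite
```

For theta = 100 deg the center image is the one at 72 deg, and the sketch selects the images at 144, 108, 72, 36 and 0 deg. Under these assumptions a 5-image-wide composite spans 4 x 36 + 66 = 210 deg.
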
Of course, the database can be used in other ways; for example, the images could be stitched into a panorama.

Databases

The results in this paper were produced with two stereo databases: one for an indoor lab location (G11) and one for an outdoor location (G14). For both databases, r = 0.5 m and n = 4.
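
The translation from a position to a SQUARE_i folder described in the methodology can be sketched as below, using the r = 0.5 m and n = 4 values of the two databases as defaults. Several details are assumptions rather than facts from the repository: the function name is hypothetical, positions are snapped to the nearest grid location, x is taken as the column and y as the row of the row-major numbering, and the index is assumed to run from 0 to n*n - 1 (the text gives the range as { 0, ..., nxn }). Adjust these if the stored folders are numbered differently.

```python
import math


def square_folder(x, y, r=0.5, n=4):
    """Map a position (x, y) in meters to a SQUARE_i folder name.

    Assumptions (not confirmed by the source): nearest-grid-location
    snapping, x -> column, y -> row, zero-based row-major index.
    """
    # Snap the metric position to the nearest grid coordinates.
    col = math.floor(x / r + 0.5)
    row = math.floor(y / r + 0.5)
    if not (0 <= col < n and 0 <= row < n):
        raise ValueError(f"position ({x}, {y}) is outside the {n}x{n} grid")
    # Row-major numbering: all columns of row 0 first, then row 1, ...
    index = row * n + col
    return f"SQUARE_{index}"


if __name__ == "__main__":
    print(square_folder(0.0, 0.0))
    print(square_folder(1.5, 1.5))
```

With these assumptions, the position (0.5, 1.0) falls in column 1 of row 2 and maps to SQUARE_9, while a position outside the 4x4 grid raises a ValueError instead of silently pointing at a missing folder.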

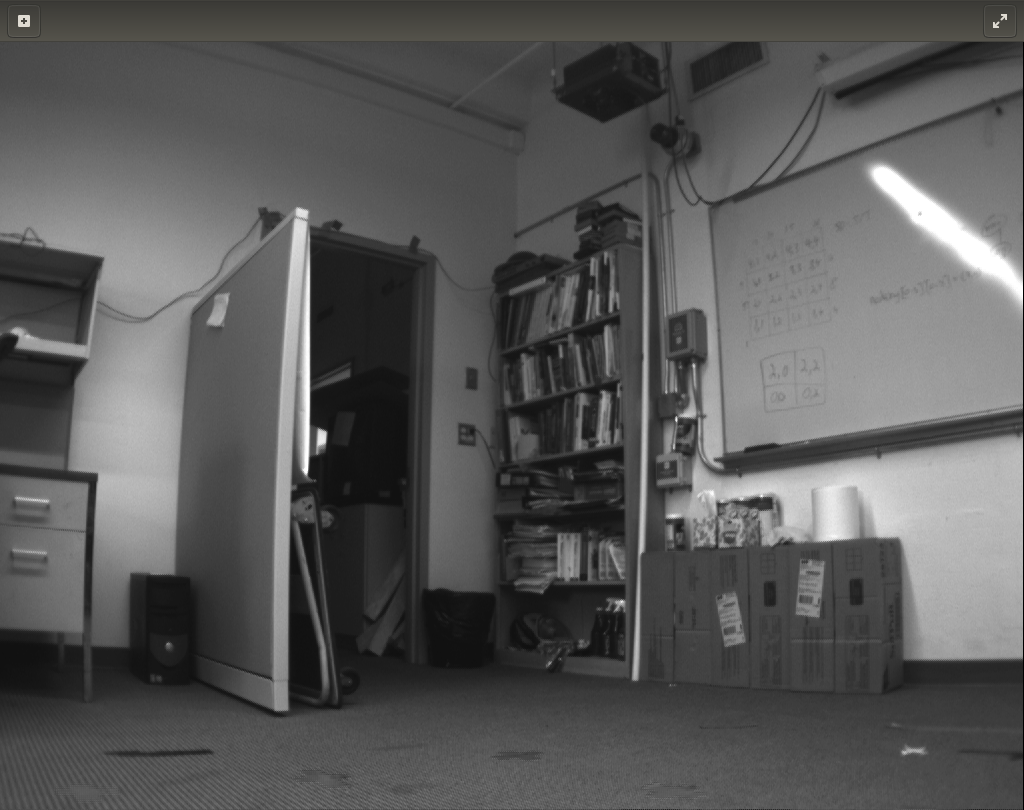

Figure 1: Grid 14 (left), Grid 11 (right), representative images

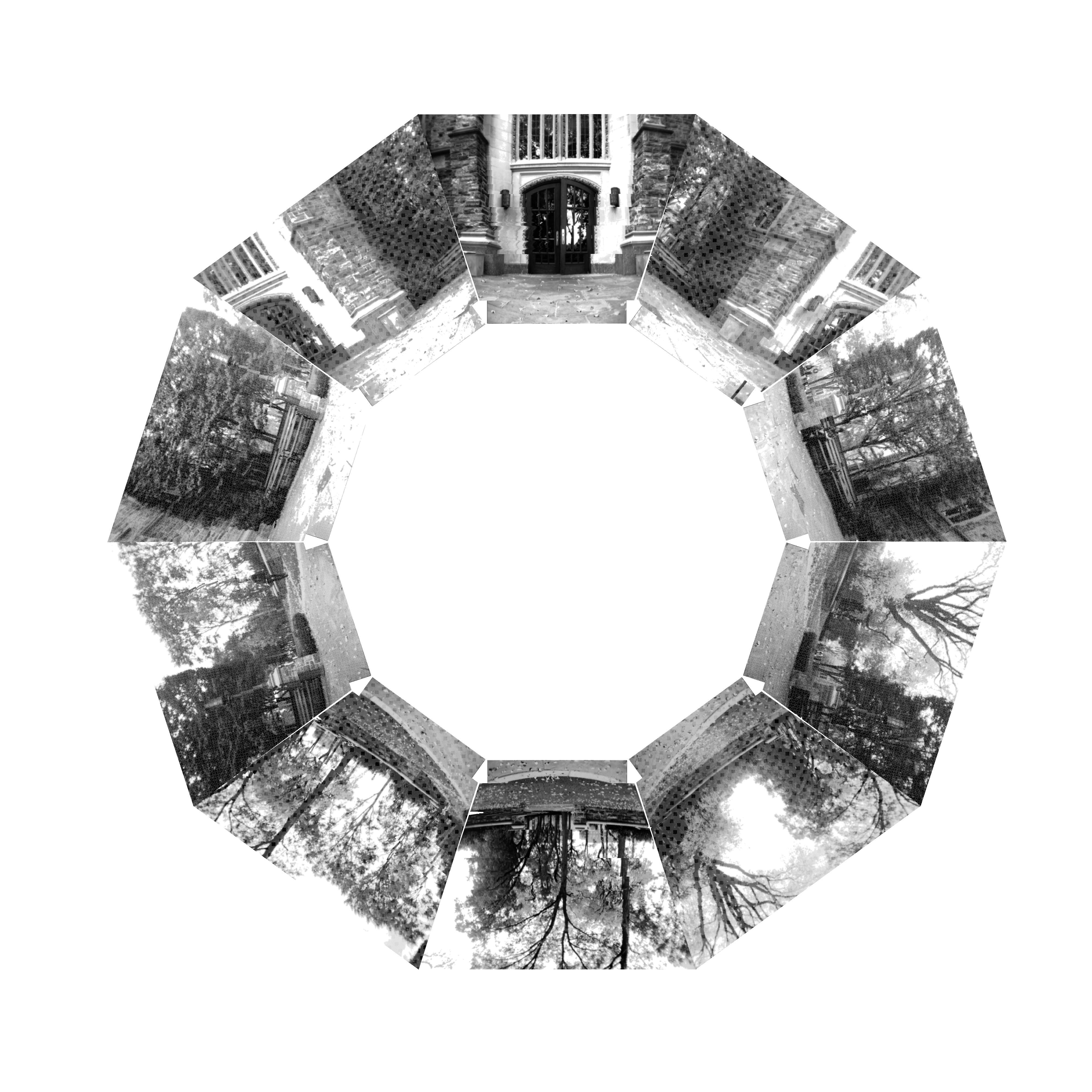

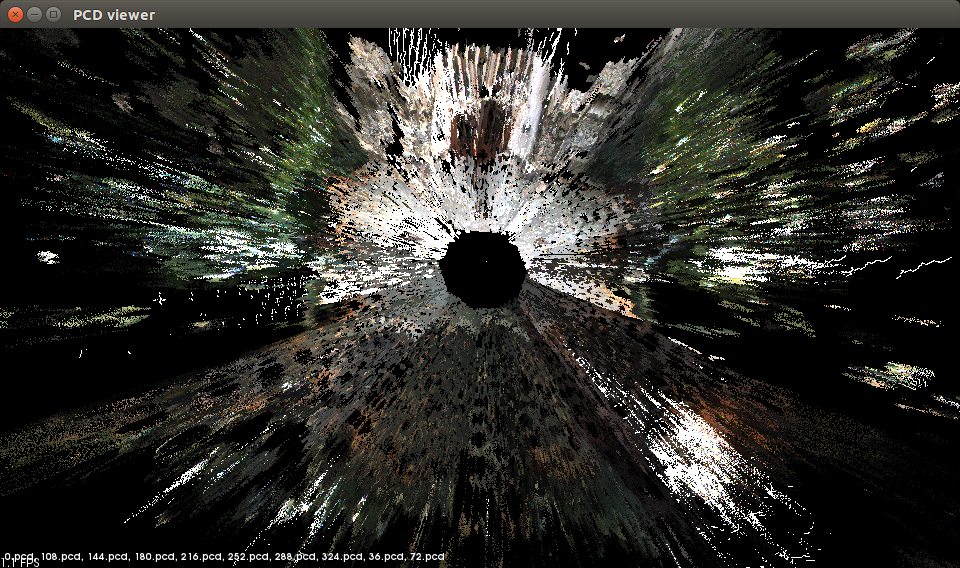

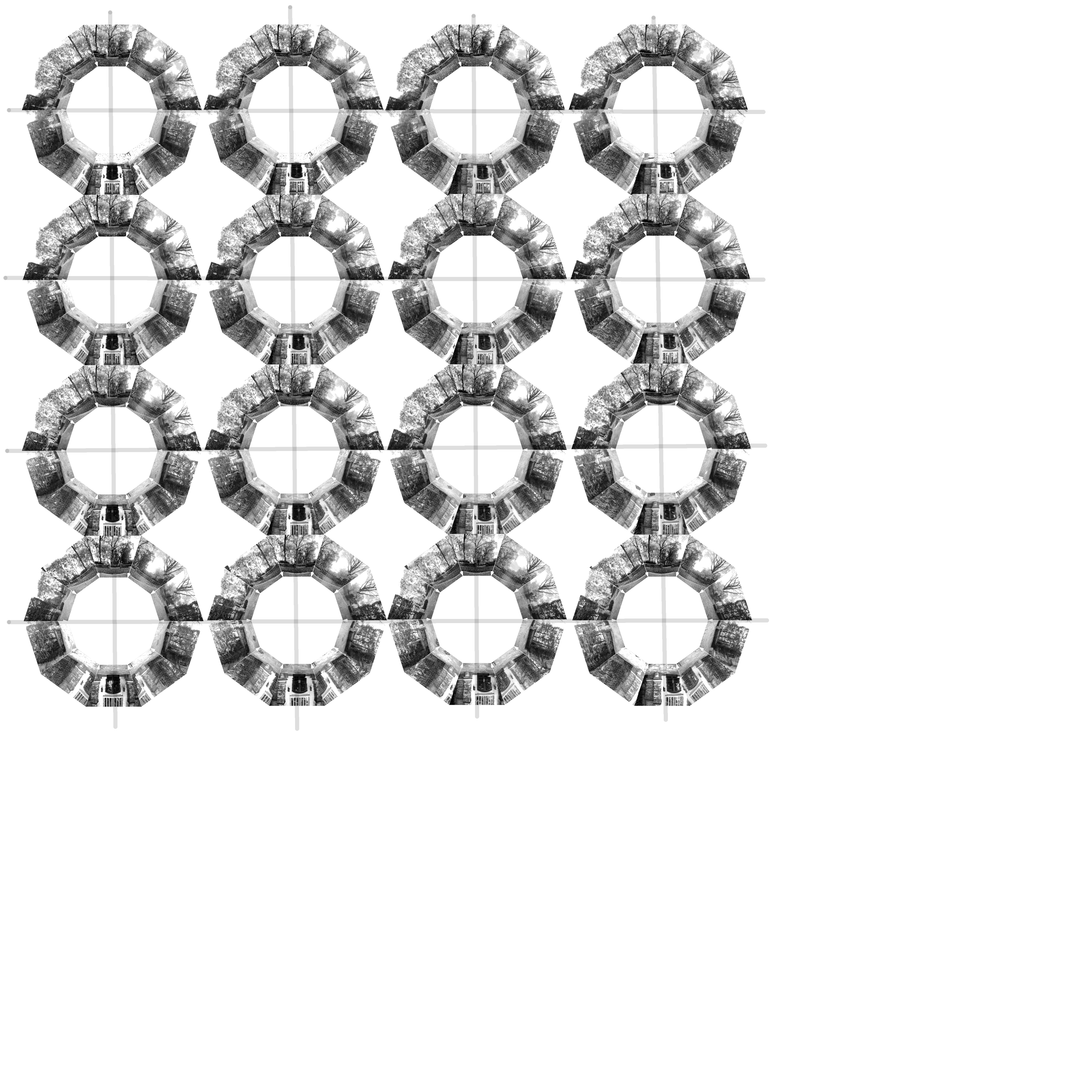

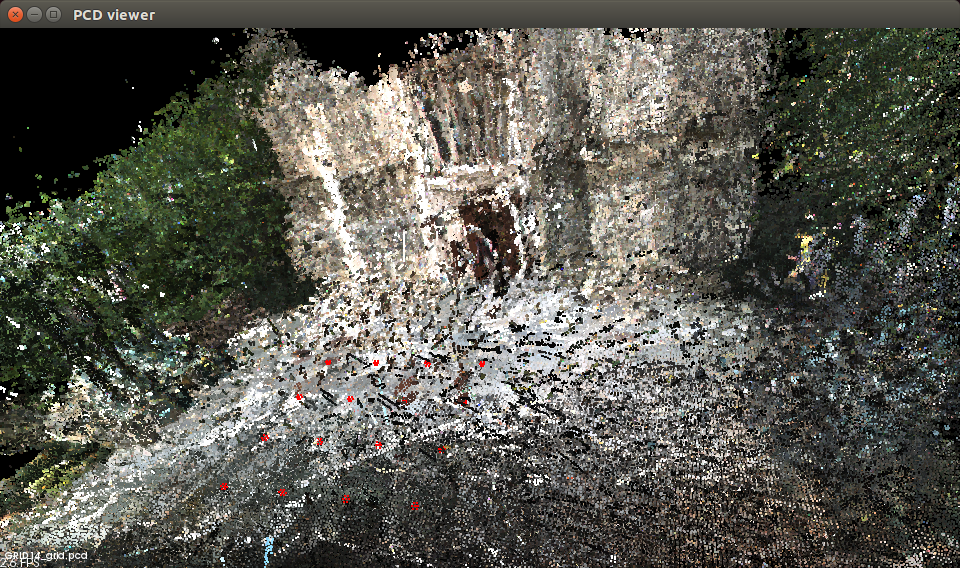

Figure 2: (a) Set of visual images displayed (keystone warped only for display purposes) at the orientation they were taken for a single square on the G14 database and (b) point cloud visualization of the stereo depth information for the same square.

Figure 3: Grid of images for the G14 location. Each square shows the 10 visual images for that grid square in the same format as Figure 2(a).

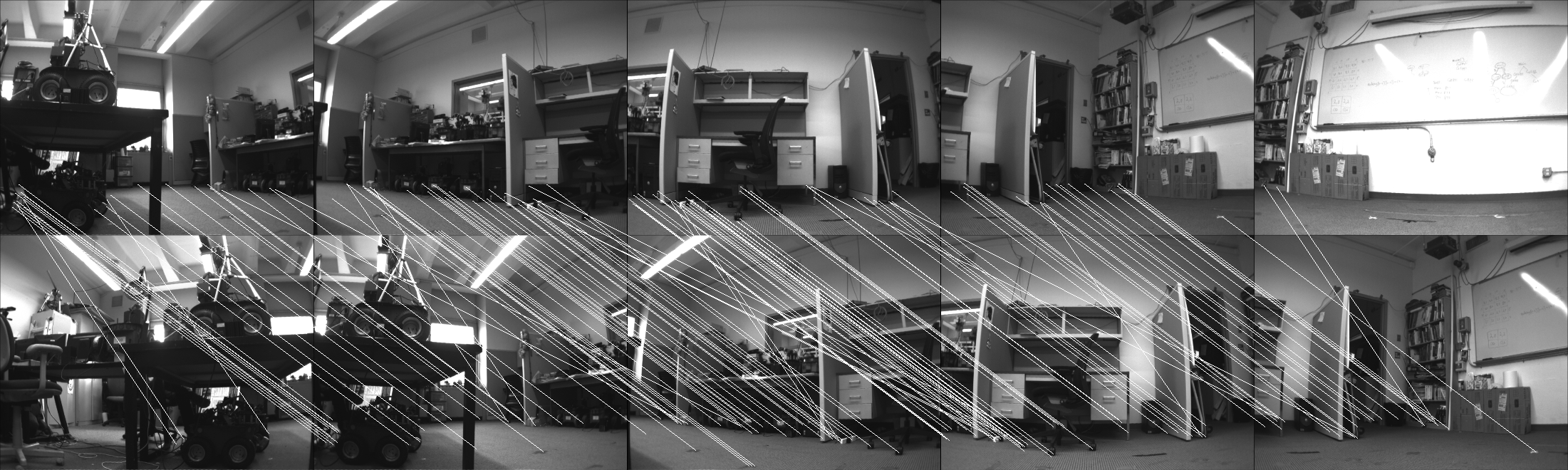

Figure 5: Wide field of view SIFT matching of home image (top) and current image (bottom) with lines between matched features (G11 database). | ||||||||||||||

Repository Information | |||||||||||||||

| Line: 23 to 93 | |||||||||||||||

| This data is provided for general use without any warranty or support. Please email any questions to dlyons@fordham.edu, bbarriage@fordham.edu, and ldelsignore@fordham.edu. | |||||||||||||||

| Added: | |||||||||||||||

| > > | References [1] P. Nirmal and D. Lyons, "Homing With Stereovision," Robotica, vol. 34, no. 12, 2015. [2] D. Churchill and A. Vardy, "An orientation invariant visual homing algorithm," Journal of Intelligent and Robotic Systems, vol. 17, no. 1, pp. 3-29, 2012. | ||||||||||||||

Permissions * Persons/group who can change the page: * Set ALLOWTOPICCHANGE = FRCVRoboticsGroup -- (c) Fordham University Robotics and Computer Vision | |||||||||||||||

| Added: | |||||||||||||||

| > > |

| ||||||||||||||
